Machines and robots undoubtedly make life easier. They carry out jobs with precision and speed, and, unlike humans, they do not require breaks as they are never tired. As a result, companies are looking to use them more and more in their manufacturing processes to improve productivity and remove dirty, dangerous, and dull tasks.

However, many tasks in the working environment still require human dexterity, adaptability, and flexibility. Human-robot collaboration is an exciting opportunity for future manufacturing since it combines the best of both worlds. The relationship requires close interaction between humans and robots, which could benefit greatly from each partner anticipating the other’s next action.

A team of U.S. researchers has published, in the journal Robotics and Computer-Integrated Manufacturing, promising results for ‘training’ robots to detect arm movement intention before humans articulate the movements.

One of the researchers said that a robot’s speed and torque need to be well coordinated because the robot can otherwise pose a serious threat to human health and safety. Ideally, for effective teamwork, the human and robot would understand each other, which is difficult because the two are so different and, in effect, speak different languages. The researchers propose giving the robot the ability to read its human partner’s intentions.

The researchers sought to achieve this by interfacing with the frontal lobe activity of the human brain. Every movement performed by the human body is analysed and evaluated in the brain before its execution. Measuring this signal can help communicate to a robot an intention to move. However, brains are highly complex organs, and detecting the pre-movement signal is challenging.

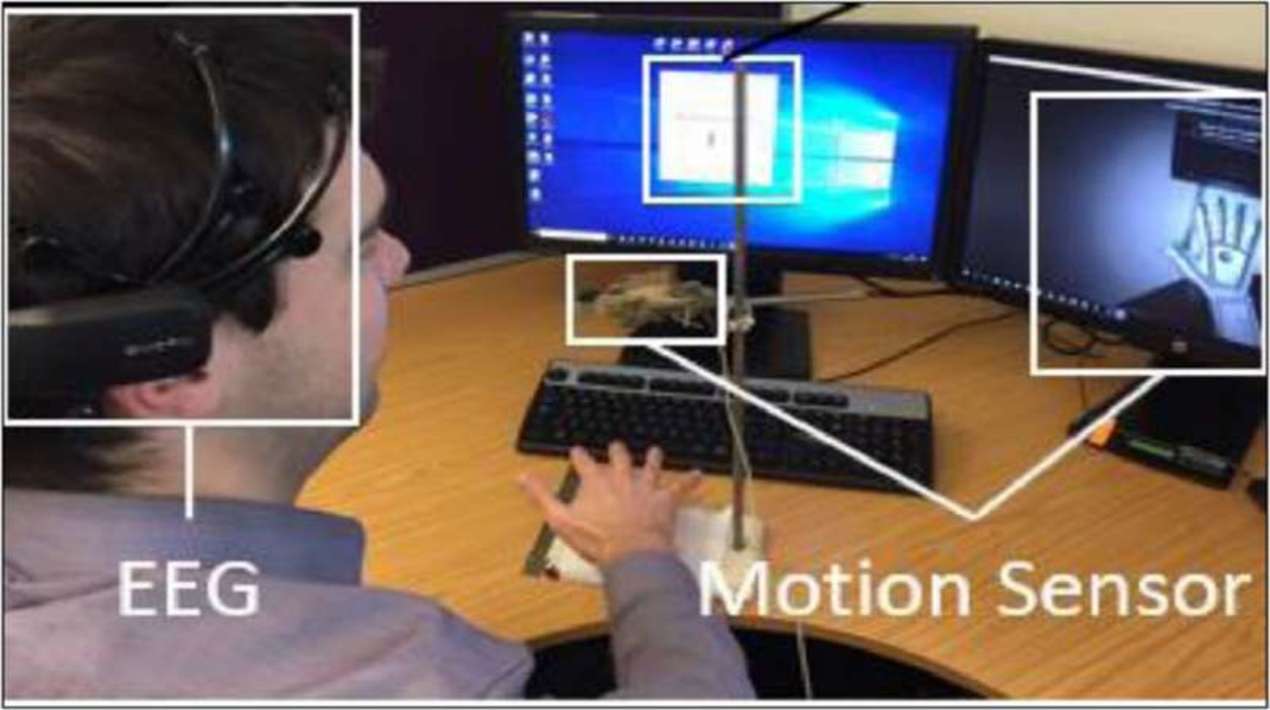

The researchers tackled this challenge by training an Artificial Intelligence (AI) system to recognise the pre-movement patterns from an electroencephalogram (EEG) – a piece of technology that allows human brain activity to be recorded. Their latest paper reports the findings of a test carried out with eight participants.

The participants had to sit in front of a computer that randomly generated a letter from A-Z on the screen and press the key on the keyboard that matched the letter. Using the EEG data, the AI system had to predict which arm the participants would move, and this intention was confirmed by motion sensors. The experimental data shows that the AI system can detect when a human is about to move an arm up to 513 milliseconds (ms) before they move, and on average, around 300 ms before actual execution.

In a simulation, the researchers tested the impact of the time advantage for a human-robot collaborative scenario. They found they could achieve higher productivity for the same task using the technology as opposed to without it. The completion time for the task was 8-11% faster—even when the researchers included false positives, which involved the EEG wrongly communicating a person’s intention to move to the robot.

The researchers plan to build on this research and hope to eventually create a system that can predict where movement is directed. They hope the latest study will achieve two things. First, the proposed technology could help towards a closer, symbiotic human-robot collaboration, which still requires a large amount of research and engineering work to be fully established.

Secondly, they hope to communicate that robots and AI/machine learning, rather than being seen as a threat to human labour in manufacturing, could also be seen as an opportunity to keep the human as an essential part of the factory of the future.

U.S. researchers have been developing a variety of technologies to help people with disabilities and diseases. As reported by OpenGov Asia, a new robotic neck brace from U.S. researchers may help doctors analyse the impact of cancer treatments on the neck mobility of patients and guide their recovery.