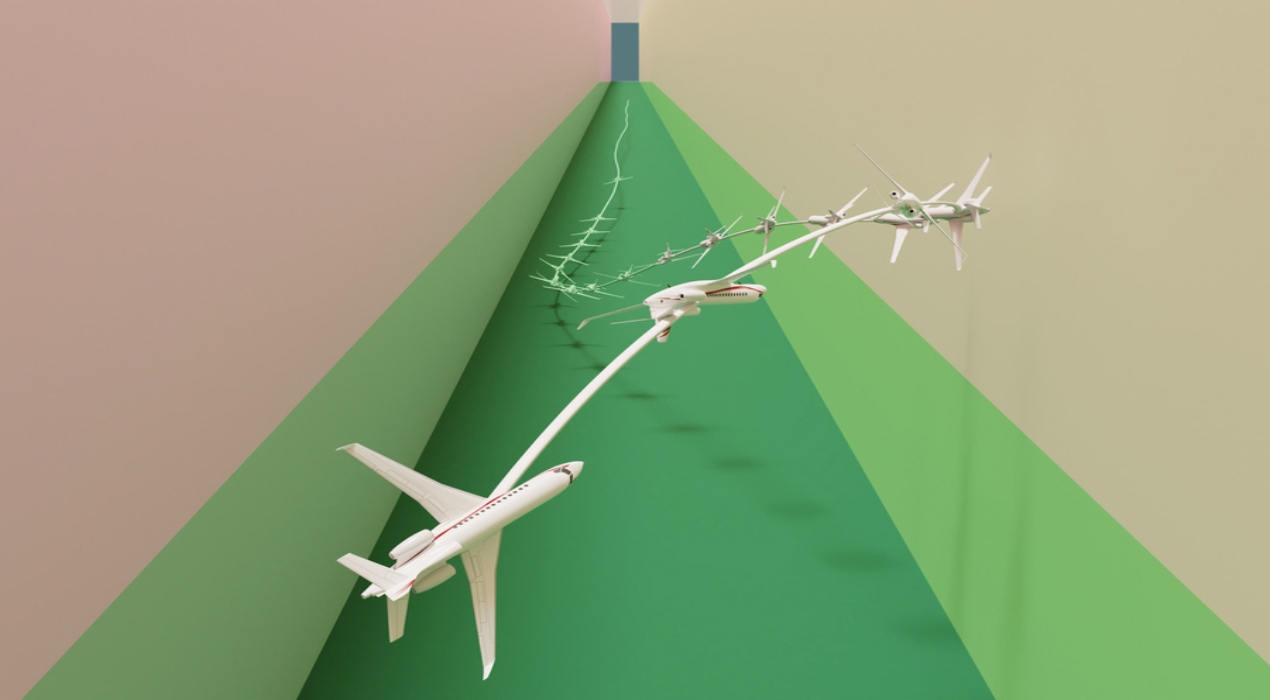

Researchers at MIT have developed a method for solving difficult stabilise-avoid problems, in which an aircraft must remain stable while evading obstacles, for example by steering clear of canyon walls while staying low enough to remain undetected. Using a machine learning approach inspired by the film Top Gun: Maverick, their technique matches or surpasses the safety of current methods while improving stability tenfold. As a result, the aircraft agent can reach its intended goal region and remain stable within it.

In simulation, the method guided a virtual fighter jet through a narrow passage without crashing, a feat akin to Maverick’s. Stabilise-avoid problems of this kind are a longstanding challenge, and existing techniques have struggled with the high-dimensional dynamics involved.

Chuchu Fan, the Wilson Assistant Professor of Aeronautics and Astronautics, a member of the Laboratory for Information and Decision Systems (LIDS), and the senior author of the research paper documenting the technique, expressed satisfaction with the result.

Numerous approaches attempt to address the challenges of complex stabilise-avoid problems by simplifying the system to solve them using straightforward mathematical techniques. More effective methods utilise reinforcement learning, a machine learning approach where an agent learns through trial and error, with rewards given for behaviours that bring it closer to the desired goal.

However, in the context of stabilise-avoid problems, there are two distinct objectives to consider: maintaining stability and avoiding obstacles. Striking the right balance between these objectives can be a tedious task.
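To see why balancing the two objectives by hand is tedious, consider the following minimal sketch. It is a toy illustration, not the researchers' setup: the one-dimensional world, the `GOAL` and `OBSTACLE_START` values, and the `avoid_weight` parameter are all assumptions made for the example. A single scalar reward mixes a goal-seeking term with an obstacle penalty, and the preferred position flips depending on the weight.

```python
# Toy illustration (hypothetical numbers): a 1-D agent picks a resting position x.
# The goal sits at x = 2.0, but every position beyond x = 1.0 is inside an obstacle.
GOAL = 2.0
OBSTACLE_START = 1.0


def reward(x: float, avoid_weight: float) -> float:
    """Single scalar reward that mixes both objectives with a hand-tuned weight."""
    stability_term = -abs(x - GOAL)           # closer to the goal is better
    violation = max(0.0, x - OBSTACLE_START)  # how deep the agent is inside the obstacle
    return stability_term - avoid_weight * violation


def best_position(avoid_weight: float) -> float:
    """Return the candidate position with the highest reward on a grid over [0, 3]."""
    candidates = [i / 100 for i in range(0, 301)]
    return max(candidates, key=lambda x: reward(x, avoid_weight))


if __name__ == "__main__":
    # A small weight lets the goal term dominate; a large weight keeps the agent out.
    print(best_position(0.5), best_position(5.0))
```

In this toy, a small weight places the agent at the goal even though the goal lies inside the obstacle, while a large weight keeps it at the obstacle boundary. Which weight is "right" has to be found by tuning, which is the difficulty the paragraph above describes.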

The researchers at MIT took a different approach by breaking the problem down into two steps. First, they reframed the stabilise-avoid problem as a constrained optimisation problem. Solving the optimisation enables the agent to reach its goal and stabilise there, meaning it stays within a specific region. To ensure obstacle avoidance, the researchers added constraints to the optimisation.
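As a rough sketch of what such a constrained formulation can look like (the notation here is assumed for illustration and is not taken from the paper), let $x_t$ be the state at time $t$, $\pi$ the control policy, $\ell$ a cost that is small near the goal, and $h$ a function that is positive only when the state is unsafe:

$$
\min_{\pi} \; \sum_{t=0}^{\infty} \ell\bigl(x_t, \pi(x_t)\bigr)
\quad \text{subject to} \quad
h(x_t) \le 0 \;\; \text{for all } t \ge 0,
\qquad x_{t+1} = f\bigl(x_t, \pi(x_t)\bigr).
$$

The objective encodes stability, since driving the cost down means reaching and staying near the goal, while the constraint encodes avoidance, so the two objectives no longer have to be traded off inside a single reward.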

In the second step, the researchers rewrote the constrained optimisation problem in a mathematical formulation called the epigraph form. This allowed them to solve it with a deep reinforcement learning algorithm while sidestepping the difficulties other methods encounter when they apply reinforcement learning to such problems.
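The epigraph form is a standard transformation in optimisation: minimising a cost $f(x)$ subject to $g(x) \le 0$ is equivalent to finding the smallest bound $z$ for which some $x$ satisfies both $f(x) \le z$ and $g(x) \le 0$. The sketch below shows this on a toy problem. It is an assumption-laden illustration, not the researchers' algorithm: the functions `cost` and `constraint`, the grid search standing in for a learned policy, and the bisection over `z` are all chosen for the example.

```python
# Toy problem (hypothetical): minimise cost(x) = (x - 2)^2 subject to x - 1 <= 0.
# The constrained optimum is x = 1, where the cost equals 1.


def cost(x: float) -> float:
    return (x - 2.0) ** 2


def constraint(x: float) -> float:
    return x - 1.0  # the point x is feasible when this value is <= 0


# Grid over [-2, 4]; a stand-in for whatever inner solver is actually used.
GRID = [i / 1000 for i in range(-2000, 4001)]


def inner(z: float) -> float:
    """Inner problem: min over x of max(cost(x) - z, constraint(x)).

    The result is <= 0 exactly when some grid point is feasible
    and has cost no greater than z.
    """
    return min(max(cost(x) - z, constraint(x)) for x in GRID)


def solve_epigraph(lo: float = 0.0, hi: float = 10.0, tol: float = 1e-6) -> float:
    """Outer problem: find the smallest z with inner(z) <= 0 by bisection."""
    while hi - lo > tol:
        mid = (lo + hi) / 2.0
        if inner(mid) <= 0.0:
            hi = mid  # z = mid is achievable, so try a smaller bound
        else:
            lo = mid  # z = mid is too ambitious, so raise the bound
    return hi


if __name__ == "__main__":
    print(solve_epigraph())
```

The appeal of this structure is that the inner problem is unconstrained: cost and constraint are folded into one quantity to minimise, and only a single scalar `z` is searched over on the outside.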

However, deep reinforcement learning is not inherently designed to handle the epigraph form of an optimisation problem. The researchers therefore had to derive new mathematical expressions tailored to their system. Once they had these derivations, they combined them with established engineering techniques used by other methods.

The controller developed by MIT researchers demonstrated exceptional performance, surpassing the baselines by effectively preventing the jet from crashing or stalling while achieving stable alignment with the desired goal.

In the future, this technique could serve as a foundation for designing controllers for highly dynamic robots that must meet safety and stability requirements, such as autonomous delivery drones. It could also be integrated into a broader system. For example, the algorithm could be activated when a car skids on a snowy road to help the driver return safely to a stable trajectory.

The researchers’ approach excels particularly in extreme scenarios that would challenge human operators. Oswin So, the paper's lead author, highlights the importance of giving reinforcement learning safety and stability guarantees before such controllers are deployed in mission-critical systems. He views their work as a promising initial step toward that goal.

Moving forward, the researchers aim to enhance their technique by incorporating better handling of uncertainty during the optimisation process. They also plan to investigate the performance of the algorithm when deployed on physical hardware, considering the discrepancies that may arise between the model’s dynamics and the real-world dynamics.

Stanley Bak, an assistant professor at Stony Brook University, highlights the significance of the refined formulation, which enables the generation of safe controllers for complex scenarios, including a nonlinear jet aircraft model developed in collaboration with researchers from the Air Force Research Lab (AFRL).