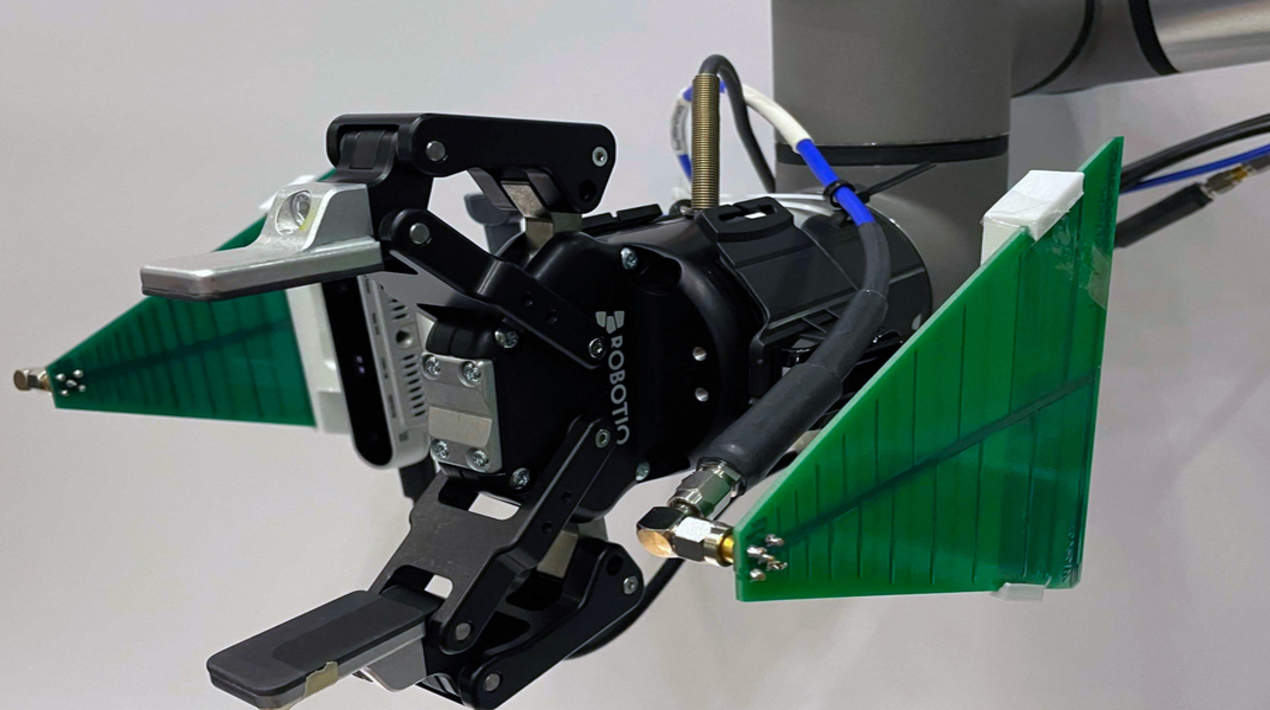

Researchers at MIT have created a robotic system that can search through piles of stuff and retrieve objects. The system, RFusion, is a robotic arm with a camera and radio frequency (RF) antenna attached to its gripper. It fuses signals from the antenna with visual input from the camera to locate and retrieve an item, even if the item is buried under a pile and completely out of view.

The prototype the researchers developed relies on RFID tags, which are cheap, battery-less tags that can be stuck to an item and reflect signals sent by an antenna. Because RF signals can travel through most surfaces, the system can locate a tagged item within a pile.

The robotic arm automatically zeroes in on the object’s exact location, moves the items on top of it, grasps the object, and verifies that it picked up the right thing by using machine learning. The camera, antenna, robotic arm, and AI are fully integrated, so the system can work in any environment without requiring a special set-up.

While finding a lost item such as a set of keys is helpful, the system could have many broader applications in the future, like sorting through piles to fulfil orders in a warehouse, identifying and installing components in an auto manufacturing plant, or helping an elderly individual perform daily tasks in the home. However, the current prototype is not yet fast enough for these uses.

“This idea of being able to find items in a chaotic world is an open problem that we’ve been working on for a few years. Having robots that can search for things under a pile is a growing need in the industry today. In the near term, this could have a lot of applications in manufacturing and warehouse environments.”

– Fadel Adib, Senior author

The system begins searching for an object using its antenna, which bounces signals off the RFID tag to identify a spherical area in which the tag is located. It combines that sphere with the camera input, which narrows down the object’s location. For instance, the item can’t be located on an area of a table that is empty. Without further optimisation, once the robot has a general idea of where the item is, it would need to swing its arm widely around the room taking additional measurements to come up with the exact location, which is slow and inefficient.
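To make the fusion step concrete, here is a minimal sketch of how a spherical RF estimate might be combined with camera input. It is an illustration only, not the researchers' implementation: the function name, the point-list representation of the camera data, and the treatment of the RF estimate as a solid sphere with a known centre and radius are all assumptions.

```python
import math

def candidate_locations(sphere_center, sphere_radius, occupied_points):
    """Keep only camera-observed occupied points that fall inside the RF sphere.

    Assumptions (not from the article): the RF estimate is a solid sphere
    given by a centre and radius in metres, and the camera yields a list of
    3D points where something is physically present.
    """
    candidates = []
    for point in occupied_points:
        # Empty regions never appear in occupied_points, so they are excluded
        # automatically; here we only test the RF constraint.
        if math.dist(sphere_center, point) <= sphere_radius:
            candidates.append(point)
    return candidates

# Hypothetical scene: two occupied spots on a table, one far from the RF estimate.
occupied = [(0.1, 0.1, 0.0), (1.0, 1.0, 0.0)]
print(candidate_locations((0.0, 0.0, 0.0), 0.5, occupied))
```

In this toy scene only the first point survives, because the second lies outside the sphere. A real system would work with noisy measurements and dense depth data rather than a handful of exact points.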

The researchers used reinforcement learning to train a neural network that can optimise the robot’s trajectory to the object. In reinforcement learning, the algorithm is trained through trial and error with a reward system. The optimisation algorithm was rewarded when it limited the number of moves it had to make to localise the item and the distance it had to travel to pick it up.
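The reward idea can be illustrated with a short sketch. The article says only that the algorithm was rewarded for limiting the number of moves and the distance travelled; the function below, its parameter names, the penalty weights, and the success bonus are hypothetical choices made for illustration.

```python
def episode_reward(num_moves, travel_distance_m, localized,
                   move_penalty=1.0, distance_penalty=0.5, success_bonus=10.0):
    """Score one search episode: fewer moves and less travel give a higher reward.

    All weights are illustrative assumptions, not values from the RFusion work.
    """
    # Each measurement move and each metre travelled reduces the reward.
    reward = -move_penalty * num_moves - distance_penalty * travel_distance_m
    # A bonus is added only if the item was successfully localised.
    if localized:
        reward += success_bonus
    return reward

# Two hypothetical episodes that both localise the item.
efficient = episode_reward(num_moves=3, travel_distance_m=2.0, localized=True)
wasteful = episode_reward(num_moves=8, travel_distance_m=6.0, localized=True)
print(efficient, wasteful)
```

Under these assumed weights, the episode with fewer moves and a shorter path scores higher, which is the behaviour a trial-and-error learner would be pushed towards.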

Once the system identifies the exact right spot, the neural network uses combined RF and visual information to predict how the robotic arm should grasp the object, including the angle of the hand and the width of the gripper, and whether it must remove other items first. It also scans the item’s tag one last time to make sure it picked up the right object.

In the future, the researchers hope to increase the speed of the system so it can move smoothly, rather than stopping periodically to take measurements. This would enable the system to be deployed in a fast-paced manufacturing or warehouse setting. Beyond its potential industrial uses, a system like this could even be incorporated into future smart homes to assist people with any number of household tasks.